Abstract

Despite the inherent advantages of TIR imaging, large-scale data collection and annotation remain a major bottleneck for TIR-based perception. A practical alternative is to synthesize pseudo-TIR data via image translation; however, most RGB-to-TIR approaches rely heavily on RGB-centric priors that overlook thermal physics, yielding implausible heat distributions. In this paper, we introduce TherA, a controllable RGB-to-TIR translation framework that produces diverse and thermally plausible images at both the scene and object levels. TherA couples TherA-VLM with a latent-diffusion-based translator. Given a single RGB image and a user-prompted condition pair, TherA-VLM yields a thermal-aware embedding that encodes scene, object, material, and heat-emission context reflecting the input scene-condition pair. Conditioning the diffusion model on this embedding enables realistic TIR synthesis and fine-grained control across time of day, weather, and object state. Compared with existing baselines, TherA achieves state-of-the-art translation performance, improving zero-shot translation by up to 33%, averaged across all metrics.

Translated Images

Comparison of RGB (left) and TIR (right) images.

Controllability: TIR images under different weather conditions.

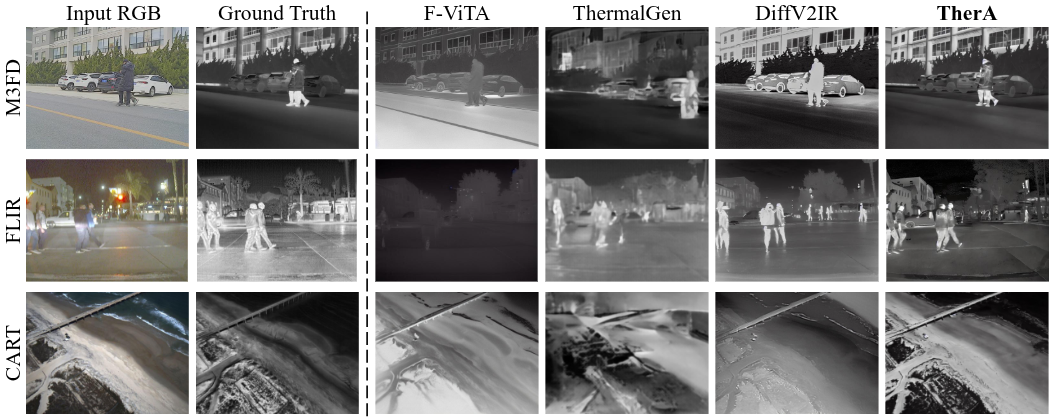

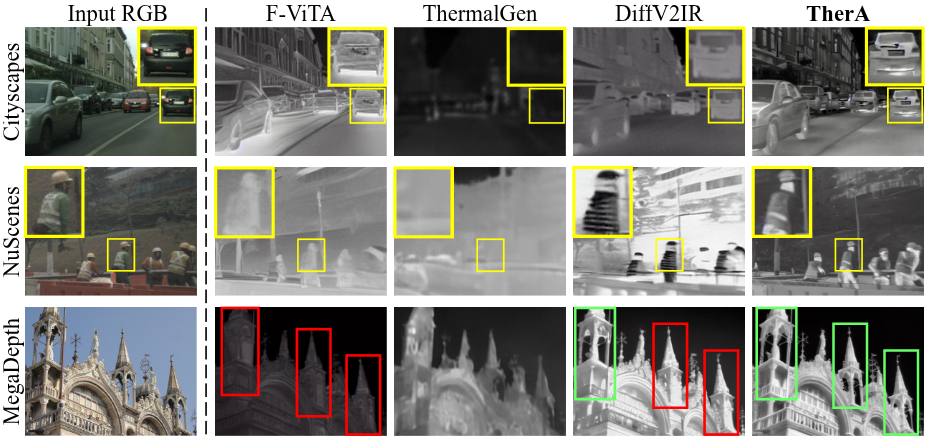

Baseline Results

Comparison of zero-shot RGB-to-TIR translation results against baselines on an RGB-TIR dataset.

Comparison of zero-shot RGB-to-TIR translation results against baselines on an RGB-only dataset.

Contact

Please file an issue on GitHub for assistance, or contact donkeymouse@snu.ac.kr.